AI Is Making Your Best Developers Slower. That’s Not a Typo.

In July 2025, METR published a randomized controlled trial that should have made every CTO rethink their AI strategy: experienced open-source developers took 19% longer to complete tasks when they were allowed to use AI coding tools than when they weren’t.

Read that again. Not junior developers. Not interns fumbling with prompts. Experienced engineers, the same profile you’re paying $180K+ for or sourcing from top-tier offshore partners, got slower when they used AI tools.

The Jellyfish engineering management report confirmed the pattern from another angle: junior developers using AI generated more code, but also produced significantly more bugs. More output, worse outcomes.

If your reaction is “well, we just rolled out Copilot across our offshore team and things seem fine,” you might want to look closer, because the 19% slowdown isn’t a bug in AI. It’s a bug in how teams adopt it.

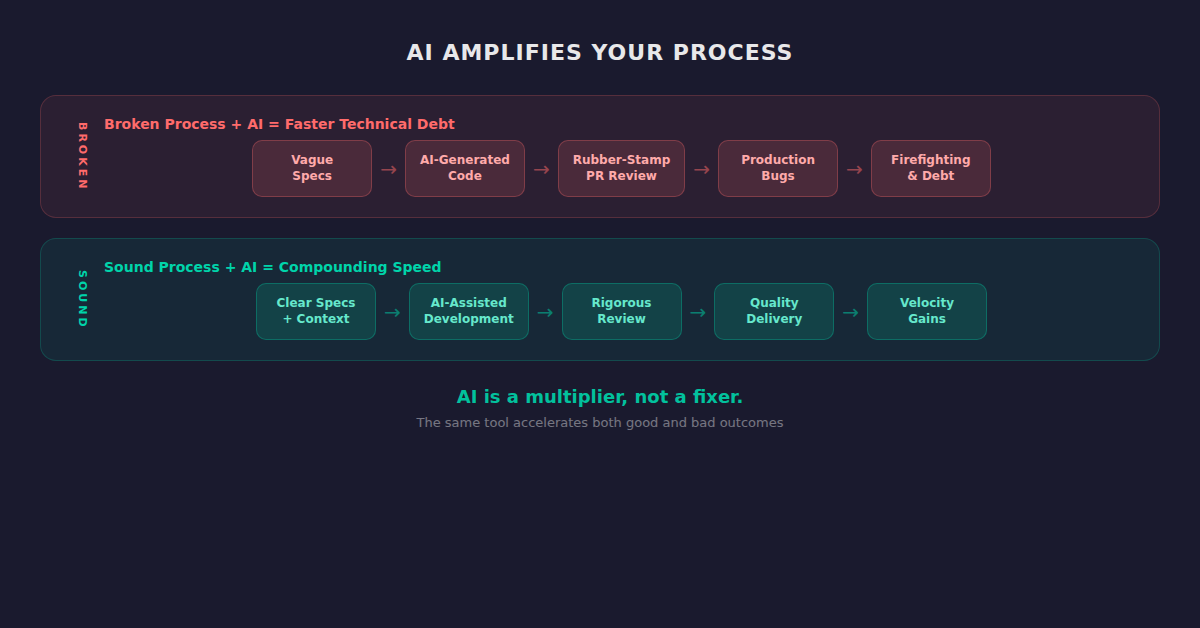

The Real Problem: Bolting AI Onto Broken Processes

Here’s what’s actually happening at many companies in early 2026:

- CTO reads about AI productivity gains

- Company buys GitHub Copilot or Cursor licenses for everyone

- Offshore team starts using AI to generate code faster

- PR review queues explode because AI-generated code looks right but carries subtle issues

- Senior onshore engineers burn out policing AI-assisted PRs

- Technical debt compounds silently

- Six months later, the “AI-powered” team is slower than before

Sound familiar? If you’ve spent time on r/ExperiencedDevs or talked to CTOs at Series B+ companies, you know this pattern is everywhere. One VP of Product described the core issue: “Tech lead overstretched with 12 devs, PO gatekeeps everything, and the pace is still frustrating.”

The problem isn’t AI. The problem is that most organizations are bolting AI tools onto offshore processes that were already broken: unclear ownership, insufficient code review standards, no shared architectural context, and management overhead that erases cost savings. (We quantified exactly how much that overhead costs in “The $150K Hidden Tax.” Spoiler: it’s worse than you think.)

AI doesn’t fix broken processes; it amplifies whatever process it lands in. Good processes get faster, and broken ones break faster.

The 5 Pillars of AI-Augmented Offshore Teams

When you look at how high-performing distributed teams actually succeed with AI (the engineering reality, not the marketing pitch), five patterns emerge consistently. Miss any one, and you get the METR result. Nail all five, and you get the speed gains everyone promised.

Pillar 1: Standardized AI Tooling With Guardrails

The worst thing you can do is let every developer pick their own AI tools and use them however they want. You’ll end up with five different coding assistants, three prompt styles, and zero consistency in the output.

What works:

- Single AI toolchain across onshore and offshore teams (same IDE extensions, same models, same configuration)

- Approved prompt libraries for common patterns—React component generation, API endpoint scaffolding, test creation

- Explicit rules for what AI should and shouldn’t generate (business logic: no. Boilerplate CRUD: yes. Node.js middleware: with templates only)

- Model-specific guidelines: Claude, GPT-4, and other models produce different patterns, and tools like Copilot and Cursor let users switch between them. Pick one primary model and standardize around it

The goal isn’t to restrict developers. It’s to make the AI output predictable enough that code review doesn’t become a nightmare.
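Explicit generation rules are easier to enforce when tooling can check them, not only when they live in a document. The sketch below is a minimal illustration under stated assumptions, not a feature of Copilot, Cursor, or any other tool: it assumes a hypothetical repository layout (src/domain, src/middleware, src/crud, test), a hypothetical way of knowing which files were AI-assisted (for example a PR label or commit trailer), and made-up names such as RULES, ruleFor, and policyViolations.

```typescript
// Hypothetical policy levels mirroring the "explicit rules" bullet.
type AiPolicy = "allowed" | "template-only" | "forbidden";

interface PolicyRule {
  prefix: string; // path prefix the rule applies to (assumed repository layout)
  policy: AiPolicy;
  reason: string;
}

// The first rule whose prefix matches wins, so list more specific prefixes first.
const RULES: PolicyRule[] = [
  { prefix: "src/domain/", policy: "forbidden", reason: "business logic stays human-written" },
  { prefix: "src/middleware/", policy: "template-only", reason: "Node.js middleware starts from an approved template" },
  { prefix: "src/crud/", policy: "allowed", reason: "boilerplate CRUD" },
  { prefix: "test/", policy: "template-only", reason: "tests start from the approved test-creation prompt" },
];

// Paths no rule covers fall back to the most conservative level.
const DEFAULT_RULE: PolicyRule = { prefix: "", policy: "forbidden", reason: "no rule covers this path" };

function ruleFor(filePath: string): PolicyRule {
  return RULES.find((r) => filePath.startsWith(r.prefix)) ?? DEFAULT_RULE;
}

interface ChangedFile {
  path: string;
  aiAssisted: boolean;   // assumed to come from a PR label or commit trailer
  usedTemplate: boolean; // generated from an approved prompt-library template
}

// Returns one message per AI-assisted file that breaks the policy.
function policyViolations(changes: ChangedFile[]): string[] {
  const problems: string[] = [];
  for (const c of changes) {
    if (!c.aiAssisted) continue; // human-written changes are out of scope here
    const rule = ruleFor(c.path);
    if (rule.policy === "forbidden") {
      problems.push(`${c.path}: AI generation not allowed (${rule.reason})`);
    } else if (rule.policy === "template-only" && !c.usedTemplate) {
      problems.push(`${c.path}: AI generation requires an approved template (${rule.reason})`);
    }
  }
  return problems;
}
```

Defaulting uncovered paths to forbidden is a deliberately conservative choice; a team that finds it too noisy could default to template-only instead. A CI step or review bot could run a check like this alongside the other automated gates.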

Pillar 2: Context-Rich Development Environments

The METR slowdown makes sense once you look at where the time went: experienced developers spent more time prompting, reviewing, and correcting AI output than they saved by generating it. Working in large, mature codebases, the AI often lacked the context to produce correct code on the first pass.

The fix is context injection:

- Architecture Decision Records (ADRs) fed into AI context windows so generated code respects existing patterns

- Codebase-aware AI configuration (Cursor project rules in .cursor/rules or the legacy .cursorrules file, GitHub Copilot’s .github/copilot-instructions.md) tuned per repository

- Living style guides that AI tools can reference—not a wiki nobody reads, but machine-readable standards

- Domain glossaries so AI-generated code uses the right terminology (critical for offshore teams working across business domains)

When AI tools understand your architecture, your naming conventions, and your domain language, the 19% slowdown flips. Developers spend less time correcting and more time building.
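A minimal sketch of context injection, assuming the ADRs, style rules, and glossary already exist as short machine-readable strings. The ContextSources shape, the buildContext function, and the sample ADR and glossary values are hypothetical. The character budget is only a rough proxy: real context limits are measured in tokens and depend on the model’s tokenizer.

```typescript
// Hypothetical shape for the machine-readable standards described above.
interface ContextSources {
  adrs: { id: string; title: string; decision: string }[];
  styleRules: string[];
  glossary: Record<string, string>; // domain term -> agreed meaning
}

// Assembles a context preamble in priority order (ADRs, then style rules,
// then glossary). Each line is either included whole or skipped, so the
// result never exceeds maxChars. Characters stand in for tokens here.
function buildContext(src: ContextSources, maxChars: number): string {
  const lines: string[] = [];
  let used = 0;
  const tryAdd = (line: string): void => {
    const cost = line.length + 1; // +1 for the newline separator
    if (used + cost > maxChars) return;
    lines.push(line);
    used += cost;
  };

  tryAdd("## Architecture decisions");
  for (const adr of src.adrs) tryAdd(`- ${adr.id} ${adr.title}: ${adr.decision}`);
  tryAdd("## Style rules");
  for (const rule of src.styleRules) tryAdd(`- ${rule}`);
  tryAdd("## Domain glossary");
  for (const [term, meaning] of Object.entries(src.glossary)) tryAdd(`- ${term}: ${meaning}`);
  return lines.join("\n");
}

// Illustrative usage with made-up values.
const preamble = buildContext(
  {
    adrs: [{ id: "ADR-007", title: "Data access", decision: "use the repository pattern" }],
    styleRules: ["Prefer named exports"],
    glossary: { Policyholder: "the insured customer" },
  },
  4000,
);
```

The resulting string could be committed to a repository rules file or prepended to prompts. Putting ADRs first reflects an assumption that architectural fit matters more than naming when the budget runs short.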

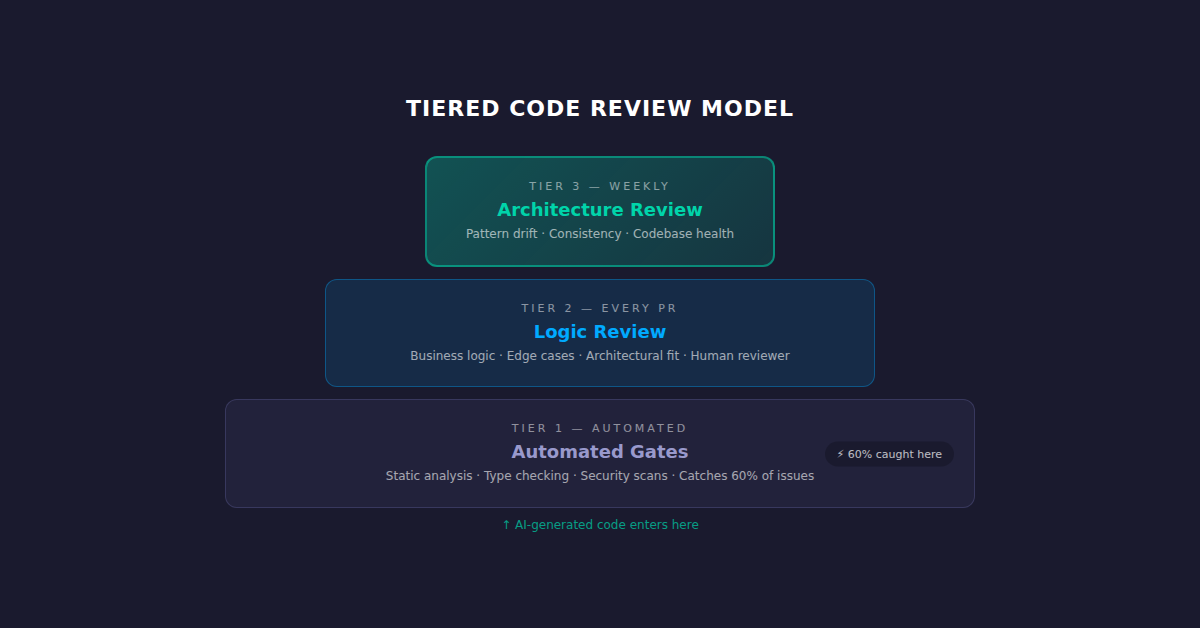

Pillar 3: Tiered Code Review for AI-Assisted Output

Traditional code review assumes a human wrote every line. AI-assisted code requires a different review posture—not more review, but differently structured review.

The tiered model:

- Tier 1 — Automated gates: AI-generated code runs through static analysis, type checking, and security scanning before it hits a human reviewer. Catch the 60% of issues that are mechanical.

- Tier 2 — Logic review: Human reviewer focuses exclusively on business logic, edge cases, and architectural fit. Skip the formatting debates—AI and linters handle that.

- Tier 3 — Architecture review: Weekly or bi-weekly review of how AI-generated patterns are evolving across the codebase. Are we drifting? Is the AI introducing subtle consistency issues?

This structure is especially critical with offshore teams, where the reviewer and the author may be separated by 8+ hours of time difference. You can’t afford a three-round review cycle when each round costs a calendar day. Pairing this tiered model with a dedicated QA testing strategy catches the issues that even good code review misses.
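The routing logic of the tiered model can be sketched as a small function. This is an illustration under assumptions, not any CI product’s API: GateResult, PullRequest, routePullRequest, and especially the introducesNewPattern signal are hypothetical, and in practice gate results would come from whatever linter, type checker, and security scanner your pipeline already runs.

```typescript
// Result of one Tier 1 automated gate (linter, type checker, security scanner).
interface GateResult {
  name: string;
  passed: boolean;
}

interface PullRequest {
  gates: GateResult[];
  aiAssisted: boolean;
  // Hypothetical signal: does the PR introduce a pattern not yet in the codebase?
  introducesNewPattern: boolean;
}

type ReviewStep =
  | { kind: "return-to-author"; failedGates: string[] }
  | { kind: "logic-review" }
  | { kind: "logic-review-plus-architecture-flag"; reason: string };

function routePullRequest(pr: PullRequest): ReviewStep {
  // Tier 1: no human time is spent until every automated gate passes.
  const failed = pr.gates.filter((g) => !g.passed).map((g) => g.name);
  if (failed.length > 0) return { kind: "return-to-author", failedGates: failed };

  // Tier 3 is periodic, so here the PR is only queued for the next architecture review.
  if (pr.aiAssisted && pr.introducesNewPattern) {
    return { kind: "logic-review-plus-architecture-flag", reason: "new AI-introduced pattern" };
  }

  // Tier 2: the human reviewer focuses on business logic, edge cases, and architectural fit.
  return { kind: "logic-review" };
}
```

Sending mechanical failures back to the author before anyone looks at the PR is what protects the time zone budget: a lint or type error costs a CI run, not a calendar day.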

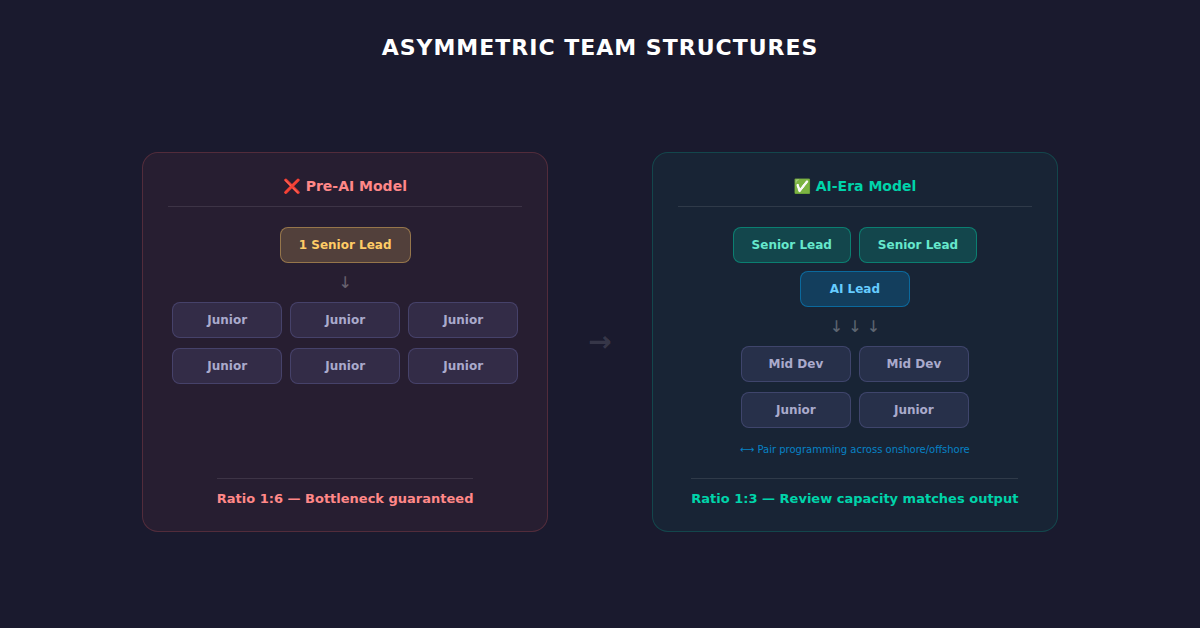

Pillar 4: Asymmetric Team Structures

The old offshore model—lots of junior developers supervised by one or two seniors—breaks catastrophically with AI. Junior developers armed with AI produce more code that needs more senior review. The bottleneck inverts.

The AI-era team structure:

- Higher senior-to-junior ratio than pre-AI teams (1:3 instead of 1:6)

- “AI Lead” role — a senior developer whose job is maintaining prompt libraries, AI tool configuration, and reviewing AI-generated patterns

- Pair programming sessions where onshore and offshore developers co-pilot with AI, building shared context that no documentation can replace

- Rotating architecture ownership so no single senior becomes the bottleneck for all AI-output review

This is where custom software development partnerships earn their premium. The team that shows up matters more than the tools they use. (If you’re currently evaluating partners, our 15-Point Offshore Partner Evaluation Scorecard now needs a sixth section: AI workflow maturity.)
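A back-of-the-envelope model shows why the bottleneck inverts. Every input here is an illustrative assumption, not a measured benchmark: one senior review per PR, about an hour per review, and AI assistance raising each junior’s output from 4 to 6 PRs a week. The function name is hypothetical.

```typescript
// Weekly hours one senior spends reviewing junior PRs, under a deliberately
// simple model: each PR gets exactly one senior review of fixed length.
function seniorReviewHoursPerWeek(
  juniorsPerSenior: number,
  prsPerJuniorPerWeek: number,
  hoursPerReview: number,
): number {
  return juniorsPerSenior * prsPerJuniorPerWeek * hoursPerReview;
}

// Illustrative inputs only: 4 PRs/week pre-AI, 6 with AI, 1 hour per review.
const preAi = seniorReviewHoursPerWeek(6, 4, 1);      // 24 hours at 1:6 before AI
const aiOldRatio = seniorReviewHoursPerWeek(6, 6, 1); // 36 hours at 1:6 with AI
const aiNewRatio = seniorReviewHoursPerWeek(3, 6, 1); // 18 hours at 1:3 with AI
```

Under these assumptions, keeping the old 1:6 ratio pushes senior review load from 24 to 36 hours a week, most of a working week, while moving to 1:3 brings it down to 18. Multiple review rounds per PR would multiply every figure, which is another reason to keep review cycles low.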

Pillar 5: Feedback Loops That Actually Close

Most offshore engagements have a feedback problem: issues are identified, logged in Jira, and forgotten. With AI-generated code, the feedback loop needs to be tighter and more specific.

What closing the loop looks like:

- AI output scoring — Track what percentage of AI-generated code survives review unchanged. If it’s below 70%, your context injection (Pillar 2) needs work.

- Prompt retrospectives — When AI output consistently misses the mark for a specific pattern, update the prompt library. This is a team activity, not individual troubleshooting.

- Codebase health metrics — Cyclomatic complexity, dependency depth, and test coverage tracked weekly, not quarterly.

- Developer satisfaction signals — If your senior engineers dread reviewing AI-assisted PRs, your process is broken regardless of what the velocity metrics say.
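A sketch of the survival-rate calculation, assuming your tooling can attribute lines to AI generation (editor telemetry or commit trailers are two possibilities; neither is assumed to exist out of the box) and can tell which of those lines merged unchanged. PrRecord, survivalRate, and classifySurvival are hypothetical names. The thresholds follow this article: below 70% means context injection needs work, and the metrics table treats below 50% as a red flag.

```typescript
// One record per merged PR. Attributing lines to AI generation is assumed to be
// handled by your tooling; this sketch only does the arithmetic.
interface PrRecord {
  aiLinesProposed: number;  // AI-generated lines in the first submitted revision
  aiLinesUnchanged: number; // of those, lines merged without modification
}

type SurvivalStatus = "on-target" | "needs-context-work" | "red-flag" | "no-data";

// Line-weighted survival rate across PRs; null when there is nothing to measure.
function survivalRate(prs: PrRecord[]): number | null {
  const proposed = prs.reduce((sum, p) => sum + p.aiLinesProposed, 0);
  if (proposed === 0) return null;
  const unchanged = prs.reduce((sum, p) => sum + p.aiLinesUnchanged, 0);
  return unchanged / proposed;
}

// Below 50%: red flag (metrics table). Below 70%: context injection needs work.
function classifySurvival(rate: number | null): SurvivalStatus {
  if (rate === null) return "no-data";
  if (rate < 0.5) return "red-flag";
  if (rate < 0.7) return "needs-context-work";
  return "on-target";
}
```

Weighting by lines rather than averaging per-PR rates keeps a handful of tiny PRs from masking a poor result on large ones.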

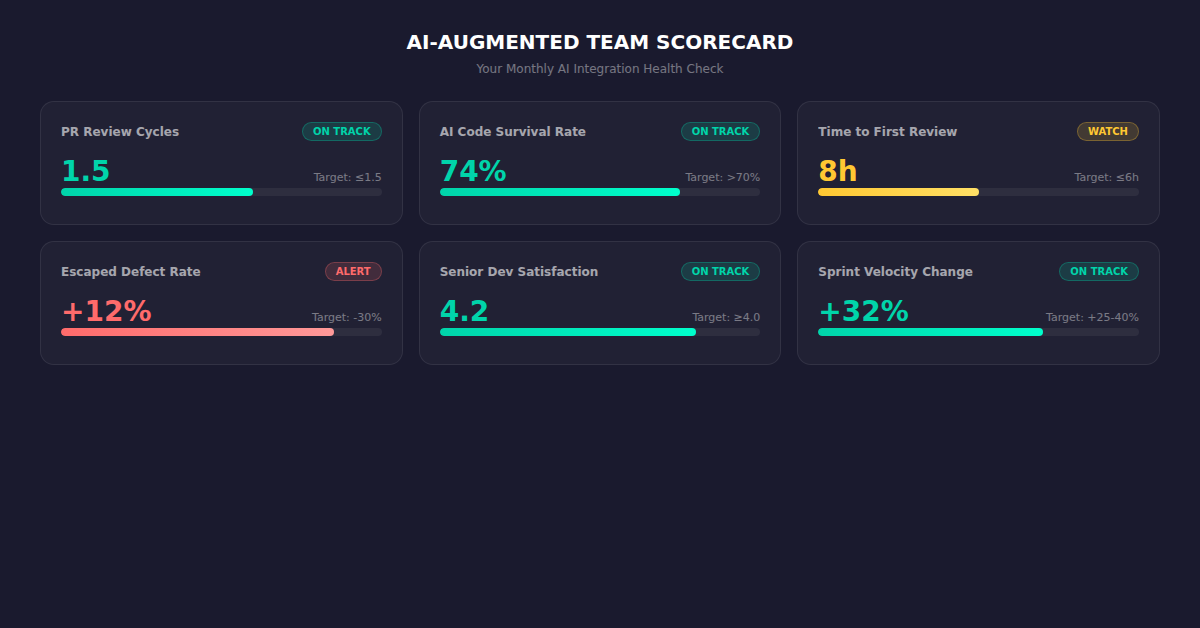

How to Know It’s Working: The Metrics That Matter

Forget “lines of code” and “number of commits.” AI makes those vanity metrics even more meaningless than they already were. Track these instead:

| Metric | Pre-AI Baseline | Target With AI | Red Flag |
|---|---|---|---|
| PR review cycles to merge | 2.5 | 1.5 | >3.0 |
| AI-generated code survival rate | N/A | >70% | <50% |
| Time-to-first-review (hours) | 12 | 6 | >18 |
| Escaped defect rate | Baseline | -30% | Any increase |
| Senior dev satisfaction (1-5) | Baseline | ≥4.0 | <3.0 |
| Sprint velocity (story points) | Baseline | +25-40% | Below baseline |

The sprint velocity increase should be a trailing indicator, not a leading one. If velocity goes up but review cycles and defect rates also increase, you’re just generating technical debt faster.
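The table’s red-flag column, plus the rule that rising velocity must not come with more review cycles and more escaped defects, can be checked mechanically. A minimal sketch with hypothetical field and function names; it assumes you captured a pre-AI baseline before rollout, and it leaves survival rate out of the baseline because the table lists it as N/A before AI.

```typescript
// One period's values for the metrics in the table. Field names are hypothetical.
interface MetricsSnapshot {
  reviewCyclesToMerge: number;
  aiSurvivalRate: number;     // fraction from 0 to 1
  hoursToFirstReview: number;
  escapedDefectRate: number;  // in whatever unit your baseline uses
  seniorSatisfaction: number; // 1-5 survey score
  sprintVelocity: number;     // story points per sprint
}

// Pre-AI values; survival rate has no pre-AI baseline.
type Baseline = Pick<MetricsSnapshot, "reviewCyclesToMerge" | "escapedDefectRate" | "sprintVelocity">;

function redFlags(current: MetricsSnapshot, baseline: Baseline): string[] {
  const flags: string[] = [];
  // Red-flag column of the table.
  if (current.reviewCyclesToMerge > 3.0) flags.push("review cycles to merge above 3.0");
  if (current.aiSurvivalRate < 0.5) flags.push("AI code survival rate below 50%");
  if (current.hoursToFirstReview > 18) flags.push("time to first review above 18 hours");
  if (current.escapedDefectRate > baseline.escapedDefectRate) flags.push("escaped defect rate increased");
  if (current.seniorSatisfaction < 3.0) flags.push("senior satisfaction below 3.0");
  if (current.sprintVelocity < baseline.sprintVelocity) flags.push("velocity below baseline");

  // Velocity is a trailing indicator: if it rises while review cycles and
  // escaped defects also rise, the team is likely generating debt faster.
  const velocityUp = current.sprintVelocity > baseline.sprintVelocity;
  const reviewsWorse = current.reviewCyclesToMerge > baseline.reviewCyclesToMerge;
  const defectsWorse = current.escapedDefectRate > baseline.escapedDefectRate;
  if (velocityUp && reviewsWorse && defectsWorse) {
    flags.push("velocity up alongside more review cycles and defects: likely technical debt");
  }
  return flags;
}
```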

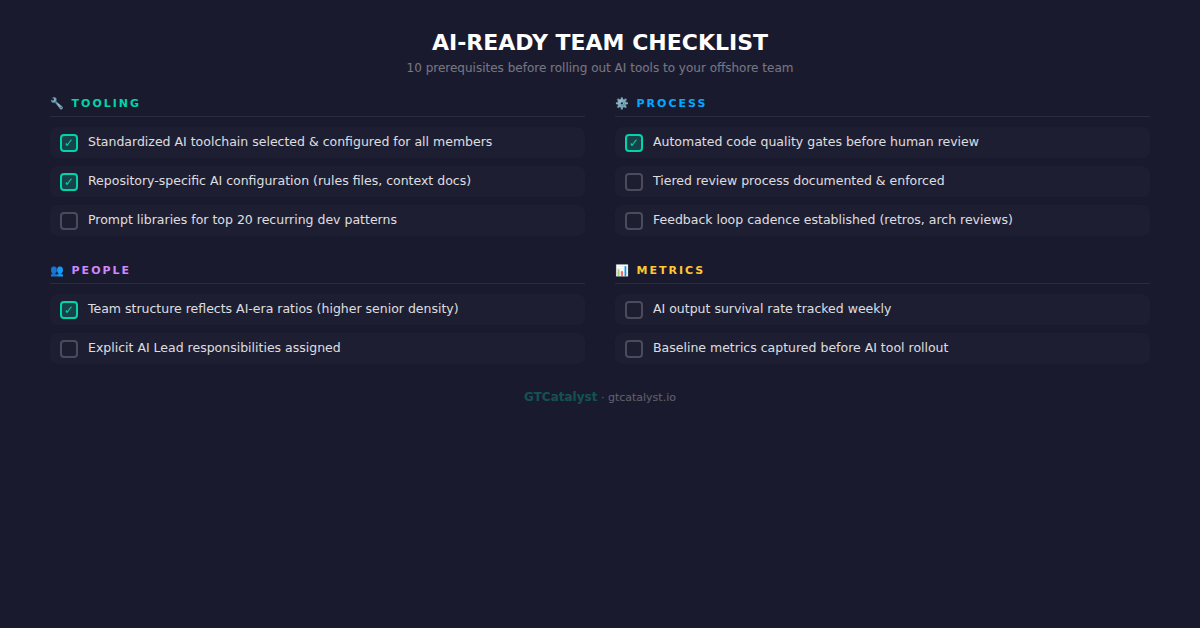

The “AI-Ready” Offshore Team Checklist

Before your next offshore engagement—or before introducing AI tools to your existing team—validate these:

- ☐ Standardized AI toolchain selected and configured for all team members

- ☐ Repository-specific AI configuration (rules files, context documents) created and maintained

- ☐ Prompt libraries for the top 20 recurring development patterns in your stack

- ☐ Automated code quality gates running before human review

- ☐ Tiered review process documented and enforced

- ☐ Team structure reflects AI-era ratios (higher senior density)

- ☐ Explicit AI Lead responsibilities assigned

- ☐ AI output survival rate tracked weekly

- ☐ Feedback loop cadence established (prompt retros, architecture reviews)

- ☐ Baseline metrics captured before AI tool rollout

The Teams That Win in 2026 Aren’t the Ones With the Best AI Tools

They’re the ones with the best processes around AI tools.

The METR study didn’t prove that AI is useless for software development. It showed that dropping AI tools into experienced developers’ existing workflows, with no deliberate workflow design around them, can make those developers slower. That’s a process problem, not a technology problem.

The CTOs who will pull ahead this year are the ones treating AI integration as a systems design challenge—standardizing tooling, injecting context, restructuring review processes, rebalancing team compositions, and closing feedback loops.

The ones who’ll fall behind are the ones who bought Copilot licenses, emailed the offshore team a “best practices” PDF, and called it transformation.

If you’re evaluating offshore development partners in 2026, the question isn’t “do your developers use AI?” Everyone uses AI. The question is: “Show me your AI workflow architecture.”

The answer to that question will tell you everything you need to know.

Ready to Build an AI-Augmented Team That Actually Delivers?

GTCatalyst builds custom software development teams with AI-augmented workflows baked into the engagement model—not bolted on after kickoff. Our React and Node.js teams ship with standardized AI toolchains, tiered review processes, and the metrics framework to prove it’s working. Our dedicated QA testing practice ensures AI-generated code meets the same quality bar as human-written code.

Talk to our team about your AI-augmented offshore strategy →