Most companies evaluate offshore partners with gut feelings and sales pitches. Here’s the framework that actually predicts success.

Reading time: 8 minutes


Why Most Offshore Evaluations Fail

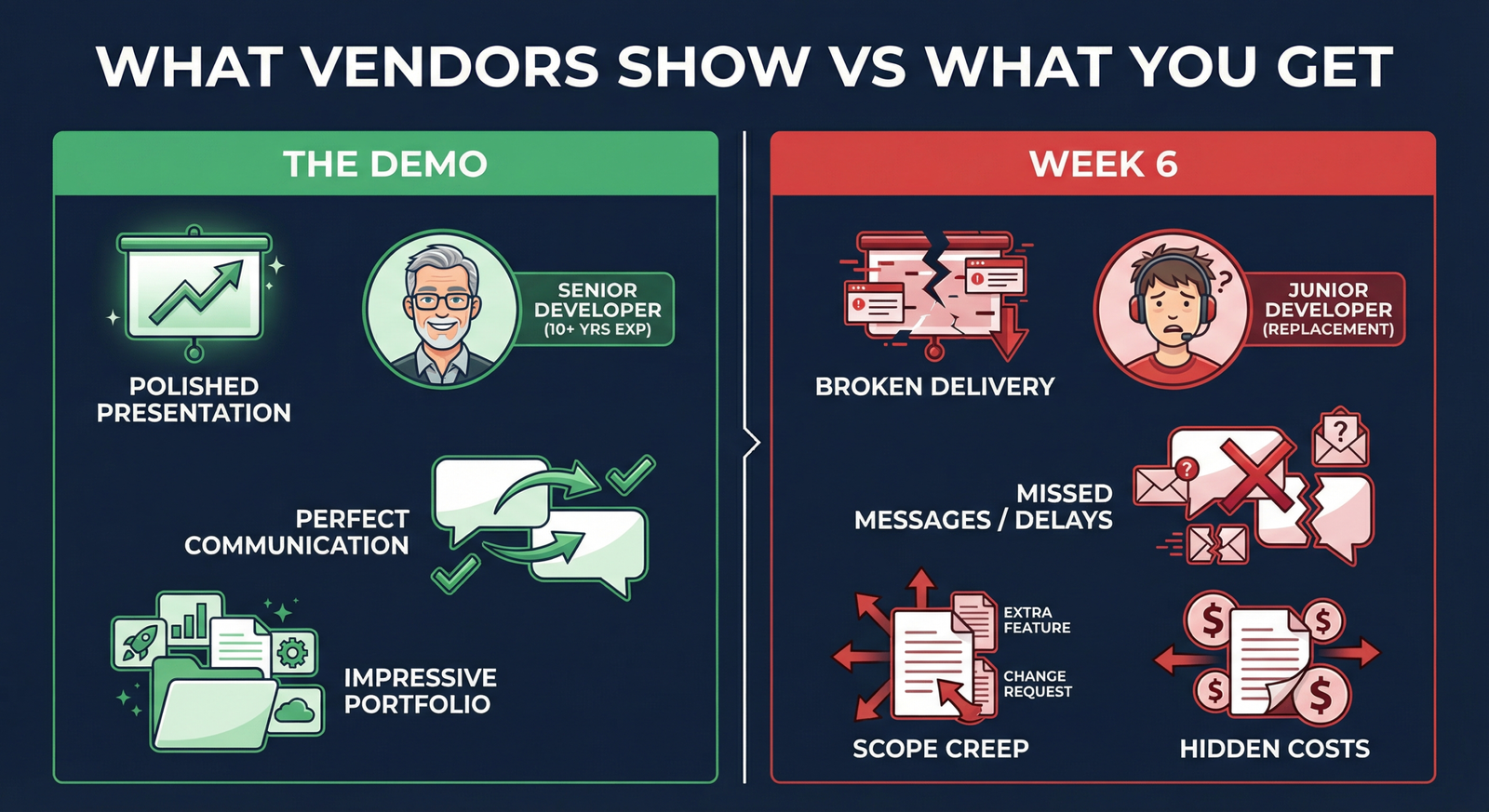

You’ve seen the demo. The developers were sharp, English was flawless, and the portfolio looked impressive. Six weeks later, you’re working with entirely different people, communication has collapsed, and your timeline is toast.

Traditional evaluations focus on sales demos, curated portfolios, and cherry-picked references — none of which predict actual delivery.

The Cost of Getting It Wrong

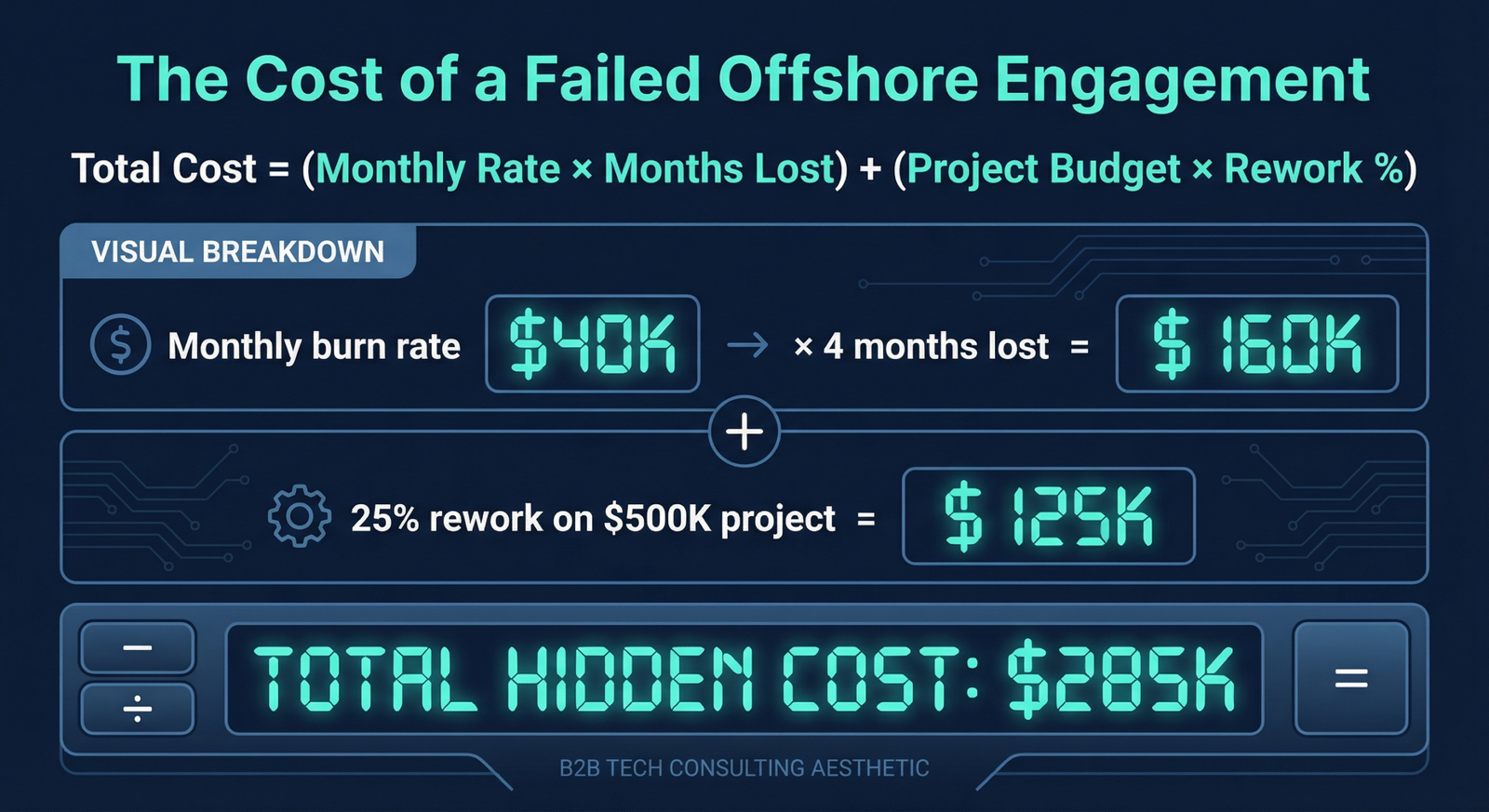

A failed offshore engagement isn’t just frustrating — it’s expensive.

If you’re spending $50K/month and lose 3-4 months to a failed engagement plus 25% rework, you’re looking at $275K+ in hidden costs.

The 15-Point Evaluation Framework

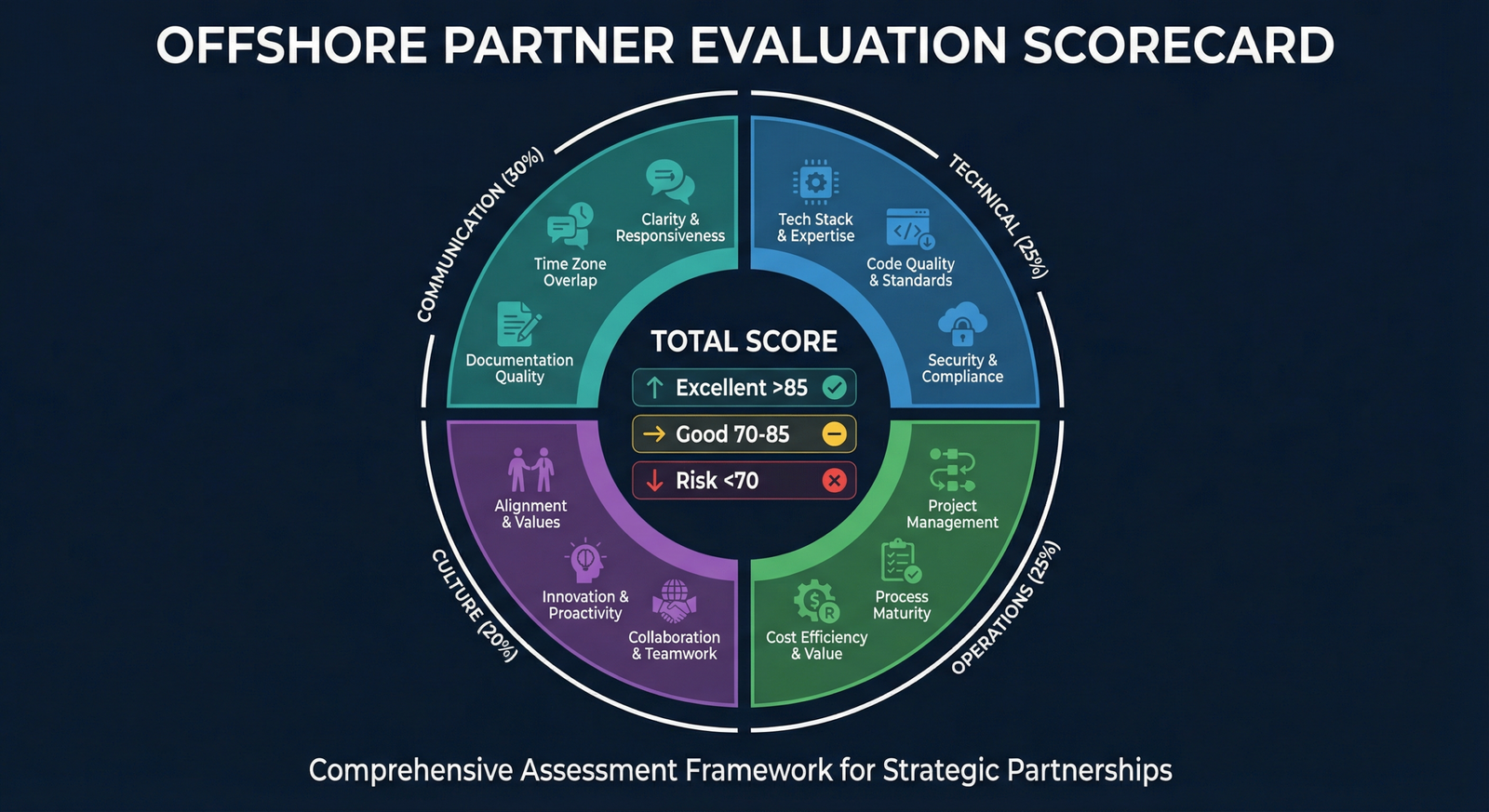

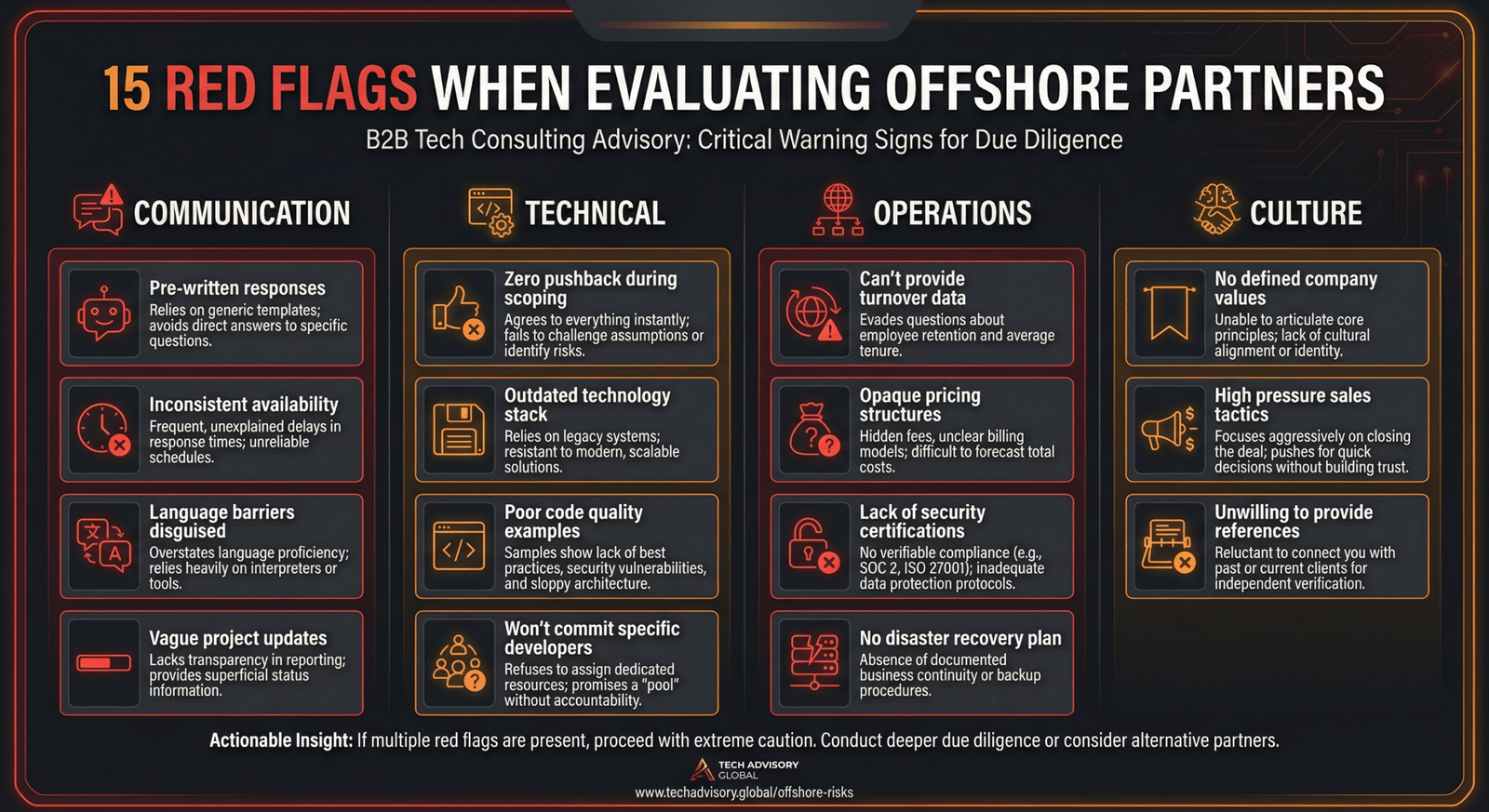

After analyzing dozens of successful (and failed) offshore engagements, we’ve distilled what actually predicts success into 15 criteria across 4 categories:

📋 The 4 Categories

- Communication & Collaboration (30%) — The #1 predictor of failure

- Technical Capability (25%) — Skills matter, but less than you think

- Operational Reliability (25%) — How they run determines what you get

- Cultural & Strategic Fit (20%) — Alignment determines long-term success

Communication & Collaboration (30%)

This is where most offshore relationships break down. Communication problems don’t just cause delays — they cause rework.

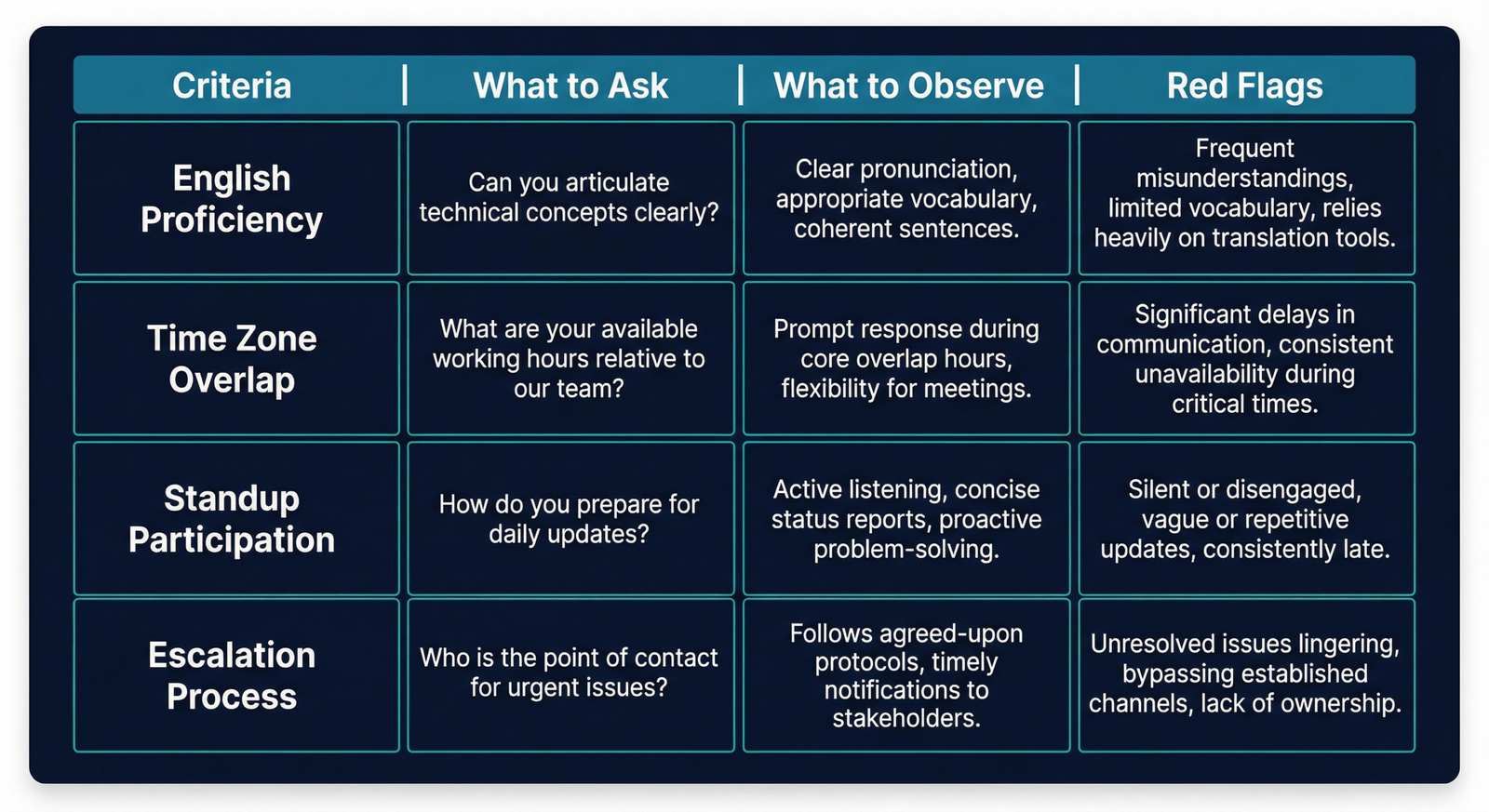

1. English Proficiency

What to look for: Complex technical explanations without confusion.

Red flag: Pre-written responses to common questions.

How to verify: Have an unscripted technical discussion.

2. Time Zone Overlap

What to look for: At least 4 hours of overlapping work time.

Red flag: Vague promises like “we’ll adjust” without specific hours.
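
One way to turn a vendor's stated hours into a number you can check against the 4-hour target is sketched below. The function name, the example working hours, and the fixed UTC offsets are illustrative assumptions, not part of the framework. For real schedules you would likely swap the fixed offsets for `zoneinfo.ZoneInfo` zones so daylight-saving shifts are handled.

```python
from datetime import date, datetime, time, timedelta, timezone, tzinfo

MIN_OVERLAP_HOURS = 4  # target from criterion 2


def overlap_hours(day: date,
                  client_tz: tzinfo, client_start: time, client_end: time,
                  vendor_tz: tzinfo, vendor_start: time, vendor_end: time) -> float:
    """Hours of the client's working day on `day` that fall inside vendor working hours."""
    c_start = datetime.combine(day, client_start, tzinfo=client_tz)
    c_end = datetime.combine(day, client_end, tzinfo=client_tz)
    total = timedelta(0)
    # The vendor's calendar date can differ from the client's, so check the
    # vendor's previous, same, and next day and add up any shared time.
    for shift in (-1, 0, 1):
        v_day = day + timedelta(days=shift)
        v_start = datetime.combine(v_day, vendor_start, tzinfo=vendor_tz)
        v_end = datetime.combine(v_day, vendor_end, tzinfo=vendor_tz)
        shared = min(c_end, v_end) - max(c_start, v_start)
        if shared > timedelta(0):
            total += shared
    return total.total_seconds() / 3600


# Hypothetical example: client at UTC-5, vendor at UTC+1, both working 09:00-17:00.
client = timezone(timedelta(hours=-5))
vendor = timezone(timedelta(hours=1))
hours = overlap_hours(date(2024, 1, 15), client, time(9), time(17),
                      vendor, time(9), time(17))
print(f"{hours:.1f} overlapping hours; meets target: {hours >= MIN_OVERLAP_HOURS}")
```

In this made-up case the two teams share only two hours, so a promise to "adjust" would need to name the specific shifted hours before it counts.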

3. Standup Participation

What to look for: Active engagement — questions, pushback, suggestions.

Red flag: Developers who read prepared statements.

4. Escalation Process

What to look for: Defined paths for raising problems.

Red flag: “We don’t have many issues.”

Technical Capability (25%)

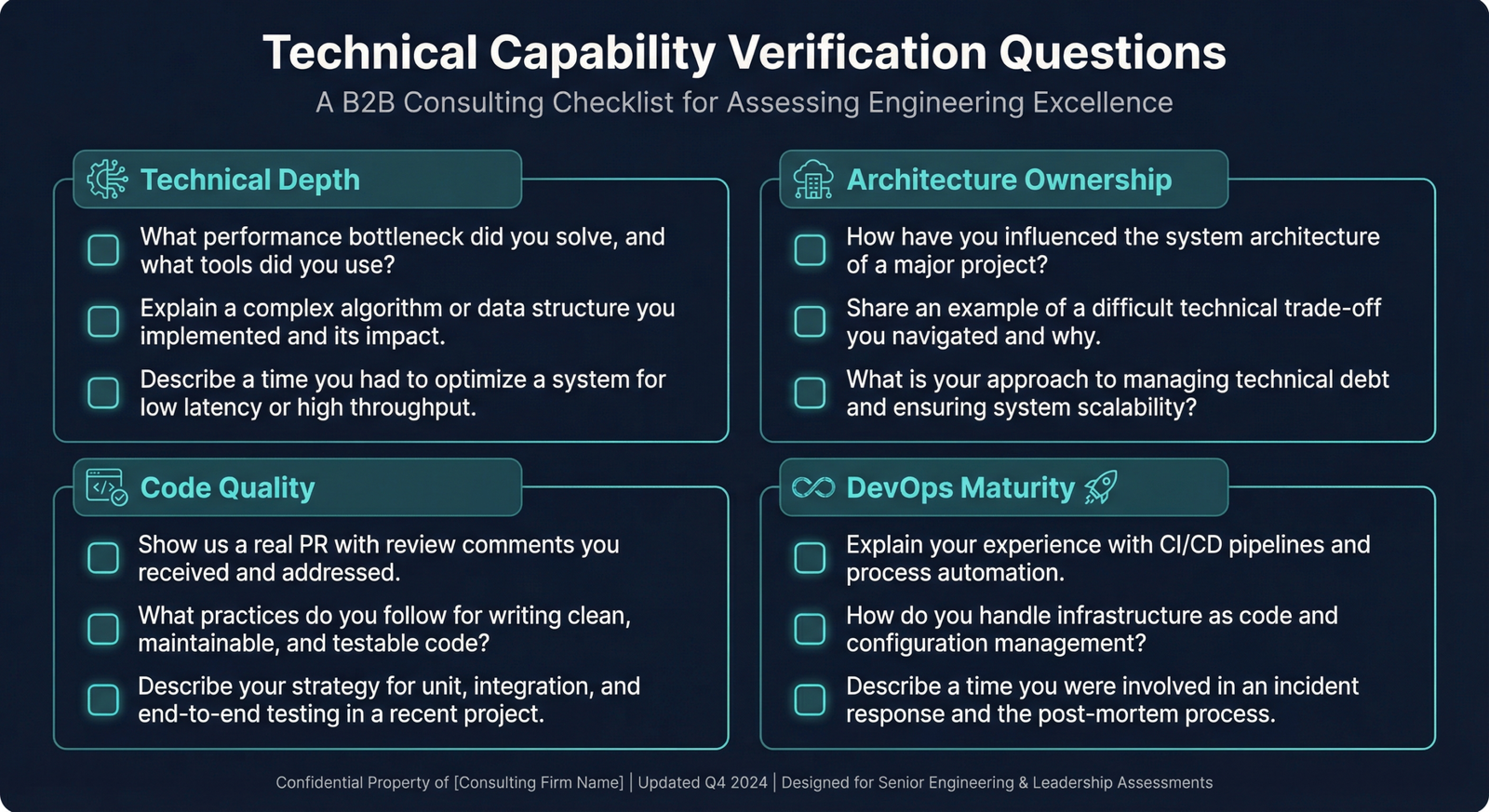

5. Technical Depth

What to look for: Performance optimization, scaling decisions, trade-offs.

Red flag: Can’t explain why they chose an approach.

6. Architecture Ownership

What to look for: Proactive suggestions and a willingness to challenge assumptions.

Red flag: “Just tell us what to build.”

7. Code Quality Standards

What to look for: Code coverage targets, PR review process.

Red flag: “We test manually.”

8. DevOps Maturity

What to look for: Automated CI/CD, infrastructure as code.

Red flag: Manual deployments.

Operational Reliability (25%)

9. Team Stability

What to look for: Less than 15% annual turnover.

Red flag: Won’t provide turnover metrics.
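
If a vendor does share raw numbers, the turnover figure can be checked with a few lines. This sketch assumes the common definition of annual turnover (departures over 12 months divided by average headcount). The article does not say which definition it uses, and the function name and sample figures are hypothetical.

```python
TURNOVER_TARGET_PCT = 15  # threshold from criterion 9


def annual_turnover_rate(departures: int, headcount_start: int, headcount_end: int) -> float:
    """Departures over 12 months divided by average headcount, as a percentage."""
    average_headcount = (headcount_start + headcount_end) / 2
    if average_headcount <= 0:
        raise ValueError("headcount must be positive")
    return 100 * departures / average_headcount


# Hypothetical vendor figures for the last 12 months.
rate = annual_turnover_rate(departures=9, headcount_start=48, headcount_end=52)
status = "meets target" if rate < TURNOVER_TARGET_PCT else "above target"
print(f"{rate:.1f}% annual turnover, {status}")
```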

10. Bench vs. Dedicated

What to look for: Named individuals assigned before signing.

Red flag: Won’t commit specific people.

11. Security & Compliance

What to look for: SOC 2 Type II, clear IP assignment.

Red flag: “We’re working on SOC 2.”

12. Pricing Transparency

What to look for: All-inclusive rates, clear change order process.

Red flag: Low headline rate with many “additional” items.

Cultural & Strategic Fit (20%)

13. Proactive vs. Reactive

What to look for: Suggestions during discovery.

Red flag: Zero pushback during scoping.

14. Cultural Alignment

What to look for: Ownership language.

Red flag: Blame-shifting in past project discussions.

15. Reference Quality

What to look for: Specific outcomes, enthusiasm.

Red flag: Generic praise, only positive references.

How to Score and Decide

Rate each criterion from 1 to 5, then apply the category weights to get a total score out of 100. Compare that total against the thresholds below.

📊 Scoring Thresholds

- 85+: Excellent — move forward with confidence

- 70-84: Good — negotiate improvements on weak areas

- 55-69: Risky — proceed only if addressable

- Below 55: Walk away
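
The scoring step can be sketched in a few lines of Python. The article gives the 1-5 ratings, the category weights, and the thresholds, but not the exact conversion to a 100-point scale. This sketch assumes each category's ratings are averaged, divided by 5, and multiplied by the category weight. The function names and the sample ratings are hypothetical.

```python
# Category weights from the framework (they sum to 100).
WEIGHTS = {
    "Communication & Collaboration": 30,
    "Technical Capability": 25,
    "Operational Reliability": 25,
    "Cultural & Strategic Fit": 20,
}


def weighted_score(ratings: dict[str, list[int]]) -> float:
    """Combine 1-5 criterion ratings into a 0-100 score (assumed mapping)."""
    total = 0.0
    for category, weight in WEIGHTS.items():
        scores = ratings[category]
        if not scores or any(not 1 <= s <= 5 for s in scores):
            raise ValueError(f"{category}: ratings must be between 1 and 5")
        category_average = sum(scores) / len(scores)
        total += weight * category_average / 5
    return total


def verdict(score: float) -> str:
    """Map a score to the article's threshold bands."""
    if score >= 85:
        return "Excellent"
    if score >= 70:
        return "Good"
    if score >= 55:
        return "Risky"
    return "Walk away"


# Hypothetical ratings: criteria 1-4, 5-8, 9-12, and 13-15 in order.
example = {
    "Communication & Collaboration": [4, 4, 5, 3],
    "Technical Capability": [4, 3, 4, 4],
    "Operational Reliability": [5, 4, 3, 4],
    "Cultural & Strategic Fit": [4, 4, 3],
}
score = weighted_score(example)
print(f"{score:.1f} -> {verdict(score)}")
```

Under this assumed mapping a vendor rated 5 everywhere scores 100 and one rated 1 everywhere scores 20, so the scale never reaches zero. If the downloadable spreadsheet normalizes differently, follow the spreadsheet.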

The 4-Week Evaluation Timeline

- Week 1: Initial screening, RFP distribution

- Week 2: Technical deep-dives, live demos

- Week 3: Reference calls, contract review

- Week 4: Final scoring, decision

Get the Complete Scorecard Template

Download the weighted spreadsheet and use it for your next vendor evaluation.

GTCatalyst helps companies evaluate and optimize their offshore partnerships. We’ve done this assessment dozens of times — let us help you get it right the first time.